One of the challenges with AI is that there is no universal definition: it is a broad category that means something different to everyone. Debating the rights and the wrongs, and the shoulds and the shouldn’ts, is a topic for another post though.

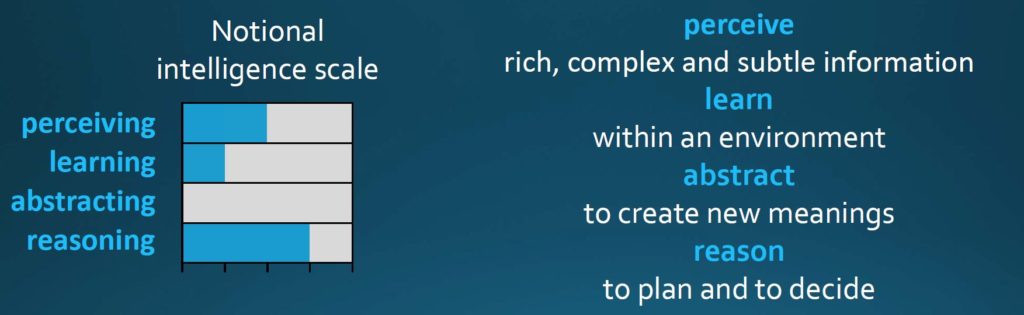

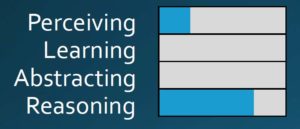

DARPA describes AI as the “programmed ability to process information”, assessed across a set of criteria that span perceiving, learning, abstracting, and reasoning.

They classify AI into three waves, outlined below. Each wave sits at a different level on the intelligence scale. I believe it is important to have a scale such as this: it helps temper expectations and compare apples to apples; for enterprises, it helps create roadmaps of outcomes and their implementations; and finally, it helps cut through the hype-cycle noise that AI has generated.

Wave 1 - Handcrafted Knowledge

The first wave operates on a very narrow problem area (the domain) and has essentially no (self-)learning capability. The key point to understand is that the machine can explore specifics, but only on the basis of knowledge and a related taxonomy/structure defined by humans. We create a set of rules to represent the knowledge in a well-defined domain.

Of course, as DARPA’s autonomous vehicle Grand Challenge taught us, it cannot handle uncertainty.
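To make the first wave a little more concrete, here is a minimal sketch in Python. The rule table and the `decide` function are made up for illustration (they are not from DARPA's material); the only point is that every piece of knowledge is typed in by a human, and anything the rules did not anticipate gets no answer.

```python
from typing import Optional

# Hypothetical knowledge base: every entry below was written by a person.
RULES = {
    ("red", "traffic_light"): "stop",
    ("green", "traffic_light"): "go",
    ("any", "stop_sign"): "stop",
}


def decide(colour: str, thing: str) -> Optional[str]:
    """Look up an action; return None when no hand-written rule applies."""
    return RULES.get((colour, thing)) or RULES.get(("any", thing))


print(decide("red", "traffic_light"))    # a rule exists for this case
print(decide("amber", "traffic_light"))  # nobody wrote a rule for amber
```

The second call returns `None`: the system has no way to reason about a situation that falls outside its rules, which is the uncertainty problem in miniature.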

Wave 2 - Statistical Learning

The second wave has better classification and prediction capabilities, much of which comes from statistical learning. Essentially, problems in certain domains are solved by statistical models that are trained on big data. It still has no contextual ability and only minimal reasoning ability.
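As a toy contrast with hand-written rules, the sketch below "learns" from examples instead. It is a nearest-centroid classifier, which I picked only because it fits in a few lines; the data, the feature names, and the `fit`/`predict` helpers are all invented for illustration, and real second-wave systems use far larger models and datasets.

```python
from statistics import mean

# Made-up training data: (height_cm, weight_kg) -> label
training = [
    ((30.0, 4.0), "cat"), ((28.0, 5.0), "cat"), ((32.0, 4.5), "cat"),
    ((60.0, 25.0), "dog"), ((55.0, 22.0), "dog"), ((65.0, 30.0), "dog"),
]


def fit(samples):
    """Compute the average feature vector (centroid) for each label."""
    by_label = {}
    for features, label in samples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }


def predict(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    def squared_distance(centroid):
        return sum((c - f) ** 2 for c, f in zip(centroid, features))

    return min(centroids, key=lambda label: squared_distance(centroids[label]))


centroids = fit(training)
print(predict(centroids, (58.0, 24.0)))
```

Nobody wrote a rule saying what a dog is; the model only summarizes the statistics of the examples it was given.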

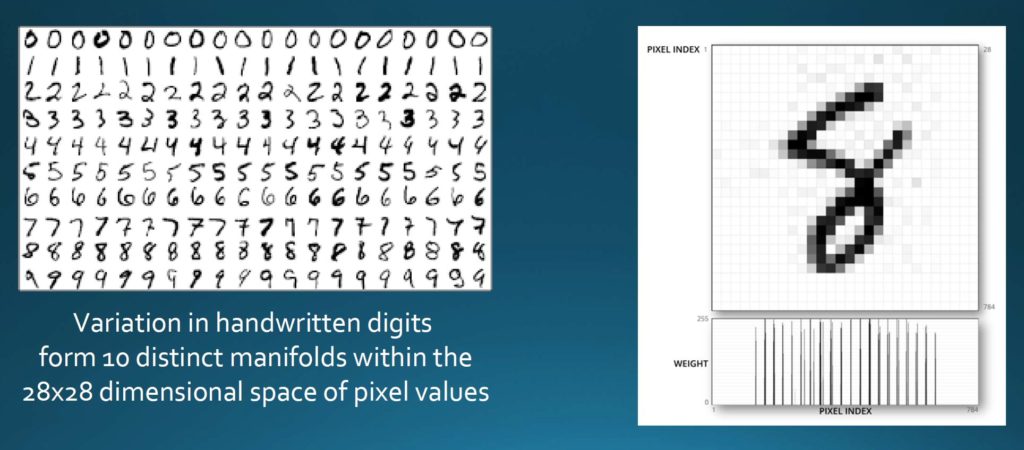

A lot of what we are seeing today is related to this second wave, and one of the hypotheses underpinning it is the manifold hypothesis. This essentially states that high-dimensional data (e.g. images, speech, etc.) tends to lie in the vicinity of low-dimensional manifolds.

A manifold is an abstract mathematical space which, in a close-up view, resembles the spaces described by Euclidean geometry. Think of it as a set of points satisfying certain relationships, expressible in terms of distance and angle. Each manifold represents a different entity, and the understanding of the data comes from separating the manifolds.

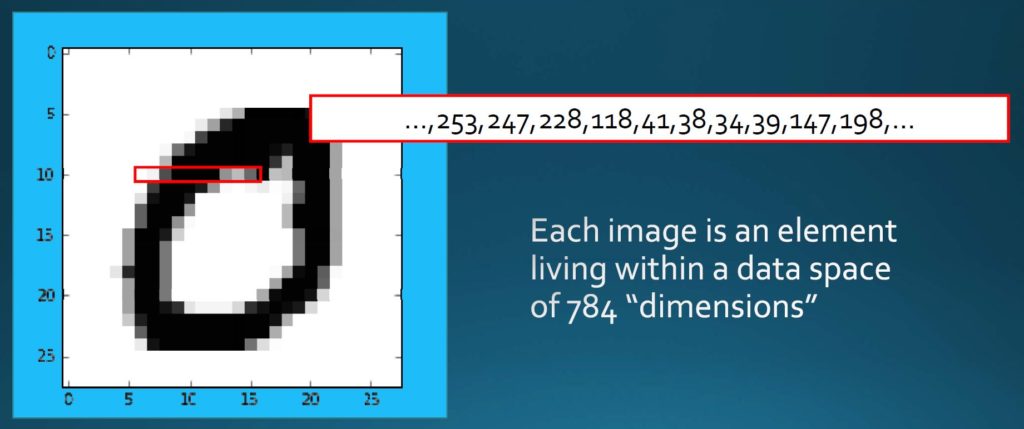

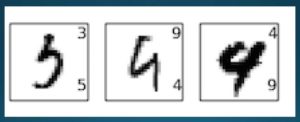

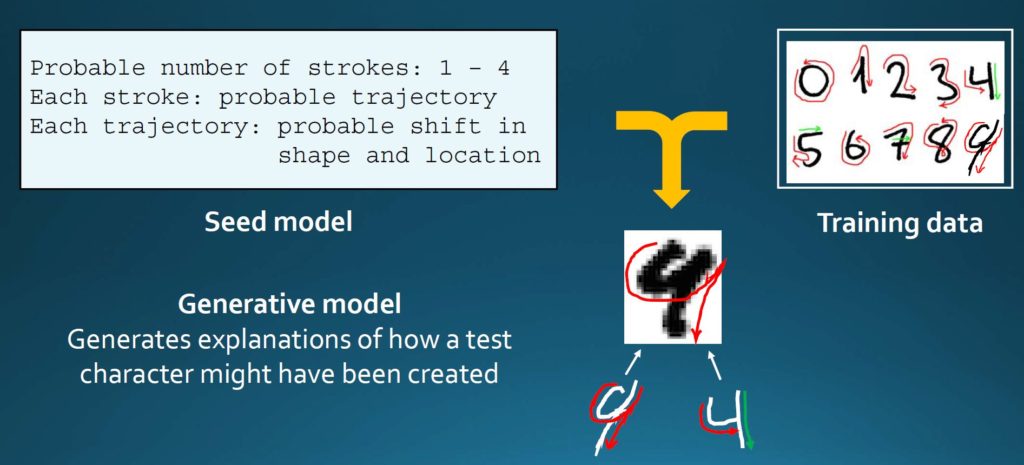

Using handwritten digits as an example: each image is one point in a space that has 784 dimensions, and these points form a number of different manifolds.
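As a rough illustration of where a number like 784 comes from: assuming the digits are 28 x 28 pixel grayscale images (the size used by the MNIST dataset, which is my assumption here rather than something stated above), flattening one image row by row gives a single point with 28 * 28 = 784 coordinates. The names below are hypothetical.

```python
WIDTH, HEIGHT = 28, 28  # assumed image size; 28 * 28 = 784

# A blank grayscale image: HEIGHT rows of WIDTH pixel intensities.
image = [[0.0 for _ in range(WIDTH)] for _ in range(HEIGHT)]
image[14][14] = 1.0  # light up a single pixel near the middle


def flatten(img):
    """Turn a 2D grid of pixels into one long vector, row by row."""
    return [pixel for row in img for pixel in row]


point = flatten(image)
print(len(point))  # one coordinate per pixel
```

Every image in the dataset becomes one such point, and the manifold hypothesis says those points cluster near much lower-dimensional surfaces inside this large space.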

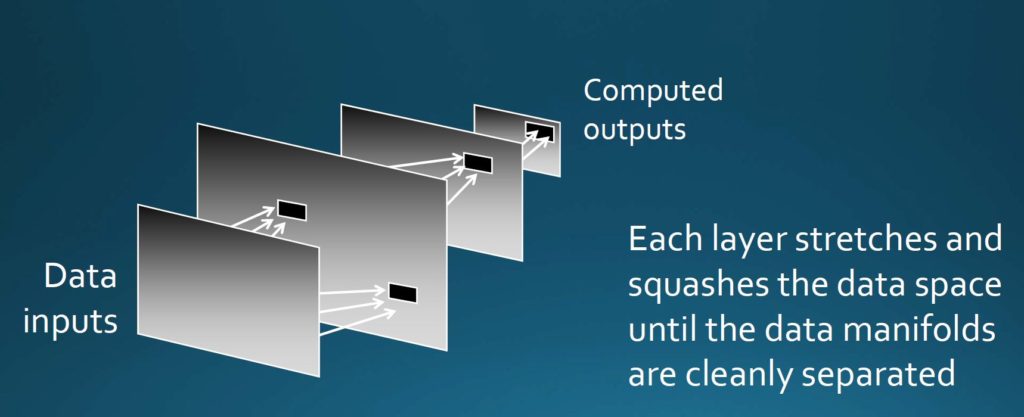

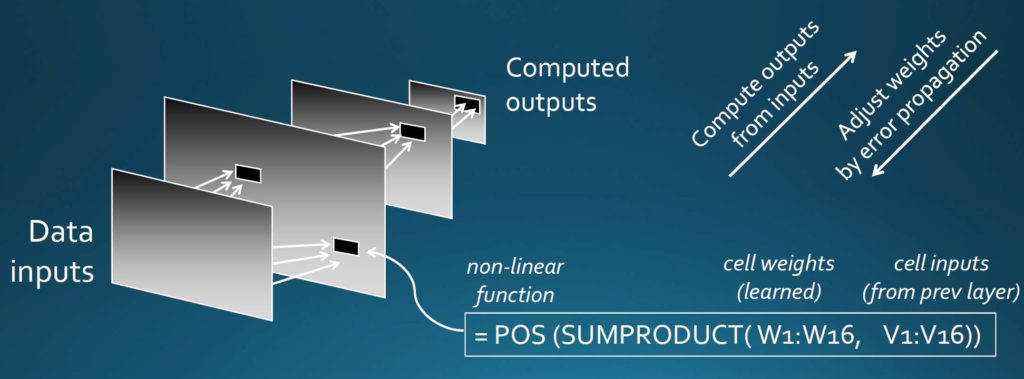

Separating these manifolds (by stretching and squishing the data) until they are isolated is the job of the layers in a neural network. Each layer computes its output from the output of the preceding layer (usually via a non-linear function), with its parameters learned from the data.
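Here is a small sketch of that "stretching and squishing", using the classic XOR points, which cannot be separated by a straight line in their original space. The layer is the usual `tanh(Wx + b)` form; the weights `W` and biases `B` are hand-picked by me for illustration and not learned, and the final threshold of 1.0 is likewise an assumption chosen to fit these numbers.

```python
import math


def layer(x, weights, biases):
    """One dense layer: tanh(W.x + b), computed one output unit at a time."""
    return [
        math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(weights, biases)
    ]


# Hand-picked (not learned) parameters, for illustration only.
W = [[5.0, 5.0], [5.0, 5.0]]
B = [-2.5, -7.5]

# XOR: the label is 1 when exactly one input is 1.
points = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

for x, label in points.items():
    h1, h2 = layer(x, W, B)
    # After the layer, a single straight line (h1 - h2 = 1) splits the classes.
    predicted = 1 if h1 - h2 > 1.0 else 0
    print(x, "label:", label, "predicted:", predicted)
```

In the transformed space the two classes sit on opposite sides of one line, which is the kind of untangling each layer contributes. In a real network the parameters would be found by training rather than chosen by hand.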

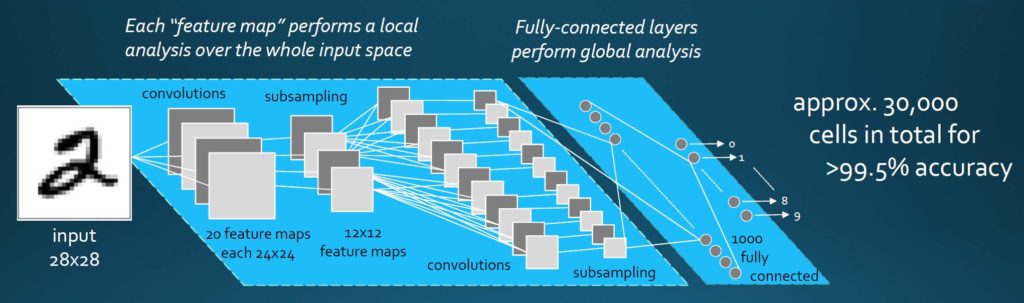

So, in statistical learning, one designs and programs the network structure based on experience. Here is an example of how an image of the number 2 passes through the various feature maps on its way to being recognized.

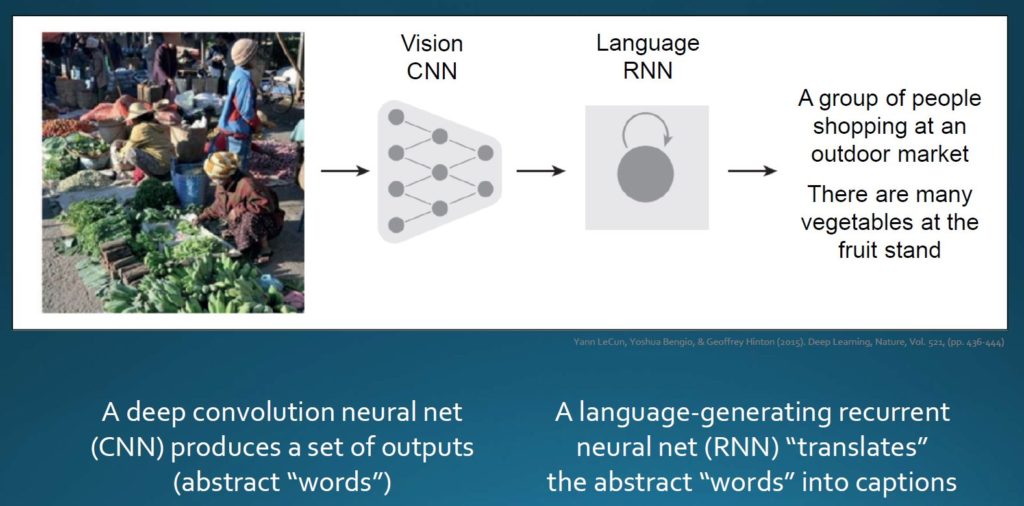

And one can combine and layer the various kinds of neural networks together (e.g. a CNN + RNN).

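And whilst it is statistically impressive, it is also individually unreliable: it performs well on average across many inputs, yet any single prediction can still be badly wrong.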

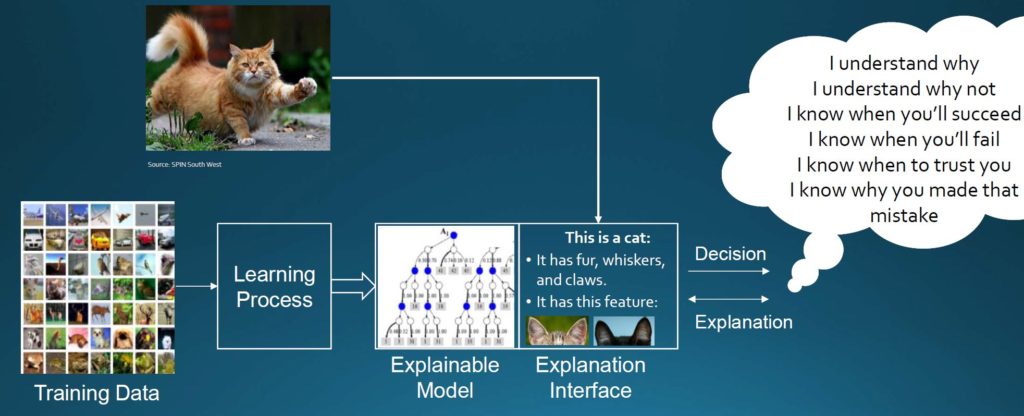

Wave 3 - Contextual Adaptation

The future of AI is what DARPA is calling contextual adaptation, where models explain their decisions, and those explanations are then used to drive further decisions. Essentially, we end up in a world where we construct contextual explanatory models that reflect real-world situations.

In summary, we are in the midst of Wave 2, which is already very exciting. For an enterprise, it is key to have a scale that places each system's ability to process information at a level of intelligence, to help make this AI revolution more tangible and manageable.

PS - if you want to read more on the manifold hypothesis and how it plays out in neural networks, I would suggest reading Chris’s blog post.